SUPPLY CHAIN MANAGEMENT PLAR

ONLINE ASSESSMENT PLATFORM

UX Research | Service Design | Research Coordination (2023–2025)

NorQuest College, Faculty of Research and Academic Innovation

Description: NorQuest College partnered with Supply Chain Canada to launch a Government of Alberta–funded pilot that helps internationally trained newcomers validate their supply chain experience through a digital Prior Learning Assessment & Recognition (PLAR) platform.

I joined a lean, high-impact team as UX Researcher and Research Coordinator, leading mixed-methods research, usability evaluation, and service design across a two-year pilot.

Overview

Challenge: Despite strong employment opportunities in Alberta’s supply chain industry, skilled newcomers are underrepresented in this sector, facing unemployment and underemployment.

Solution: Developed targeted workforce integration interventions and supports: a digital PLAR assessment platform that validates existing supply chain knowledge, skills, and training, paired with career coaching, industry membership, and work placements.

My Role

UX Researcher & Research Coordinator

(With significant service design and content development)

Designed qualitative and quantitative research tools (surveys, interview protocols, usability scripts)

Recruited 68% of all program participants into the research study

Led moderated usability testing (remote + proctored)

Conducted behavioural observation during live assessments

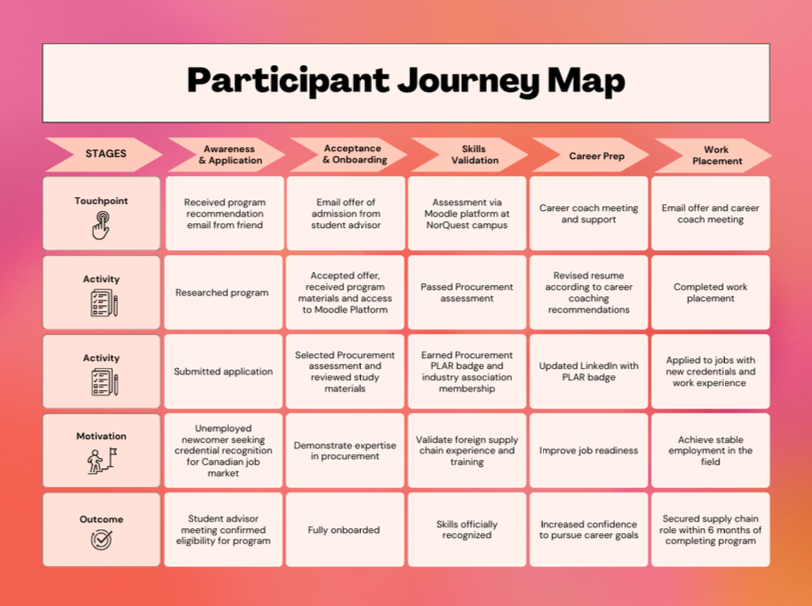

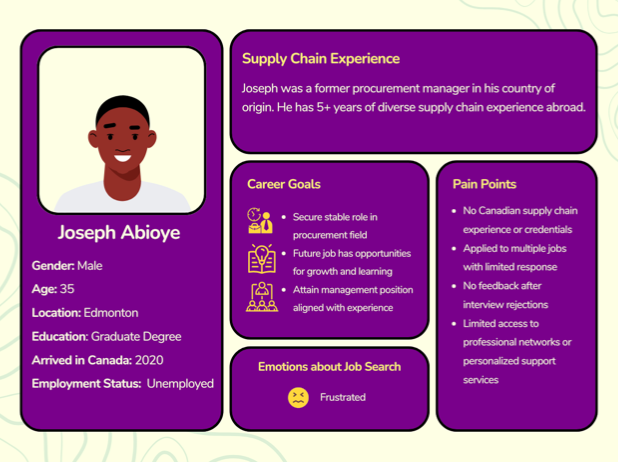

Developed participant personas and a full service journey map

Evaluated accessibility and cognitive load

Synthesized findings into usability and academic reports (forthcoming)

Wrote the Research Ethics Board (REB) application and secured approval

Developed module content and animated assessment videos

Produced data visualizations and graphics for stakeholder reporting

Tools & Platforms

Research & Surveys: Qualtrics

Design & Prototyping: Figma, Canva

Content Development: Vyond

Learning Platform: Moodle

Workflow & Documentation: Microsoft 365

Research Design

Quantitative and Qualitative Research Methods/Evaluation

I designed a full evaluation framework integrating:

Semi-structured participant interviews

Moderated usability testing

Remote assessment behavioural observation (screen + camera via Teams)

Pre- and post-assessment surveys

Post-program survey

Assessment result data

Government intake forms and staff notes

Usability Methodology

Participants completed real assessment tasks (intro video + scenarios 1–3 covering all question types).

I documented:

Navigation friction

Behavioural cues (hesitation, cognitive strain, self-talk)

Accessibility barriers

Technical breakdowns

Post-task emotional response

Issues were coded by severity (Critical, Serious, Moderate, or Minor) and paired with actionable design recommendations.

Service Design

Beyond platform usability, I mapped the entire participant journey in a full service journey map.

Impact

68% recruitment rate into research (exceptionally high engagement)

75% of participants passed at least one assessment, validating prior experience

92% program satisfaction

Accessibility improvements implemented (captions, visual clarity, UX refinements)

Technical errors resolved prior to scale

Assessment questions revised to reduce cognitive overload

Identified need for a condensed refresher course (simplified terminology + foundational concepts)

Recommendations adopted across platform and service touchpoints